Roverbot

One-eyed line following robot made from Lego

Overview

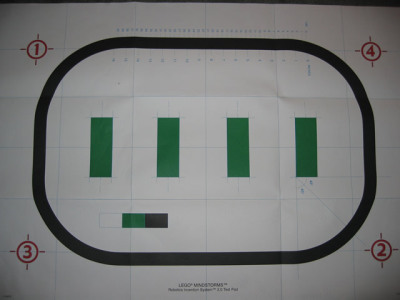

Roverbot is a robust robot body that is easily adapted for just about any simple indoor mobile application you can think of. When I got my Lego kit, the first application I wanted to try was to give Sandwich some competition in the line following arena.

This page covers the Roverbot base, the software I wrote, and a head-to-head comparison with Sandwich in both sensor design and line following performance.

Roverbot Base

The Lego Mindstorms Robotic Invention System (RIS) 2.0 comes with instructions for building the Roverbot base. It is a compact design that’s pretty sturdy. The mount point for the sensors is modular so you can easily swap a variety of touch sensors and light sensors. Similarly, the drive train is modular, making it simple to use wheels, walking legs, or tank-like treads.

Video Footage

If you listen real close, you can hear Ollie chomping in the background :-)

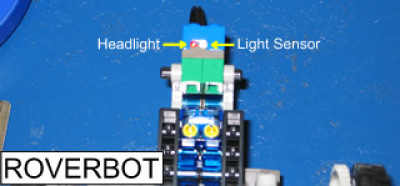

For my line following robot, I chose to use the wheels for the drivetrain, with the light sensor positioned at the front of the bot. The assembly took about 1 hour, following the instructions provided with the kit. It could have taken less time, but I was like a kid in a candy store and sat there admiring each piece of Lego. It didn’t help that my kit arrived in the mail the day before I was leaving for a vacation, so I also had to pack.

Line-Following Software

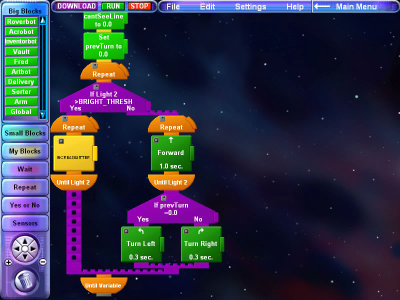

Once I finished assembling the Roverbot base, the first thing I did was play around with the programming language that comes with the Lego kit. It is so easy to use that you really don’t need any prior programming experience. I started out with some simple behaviors like driving forward, simple turns, and some dancing. Then, to challenge myself, I figured I’d write a program for line following.

Algorithm

My Roverbot uses a single light sensor for line following, so I had to come up with a technique that could overcome that limitation. I call the algorithm “Line Addict”. The basic idea is that my bot is “addicted” to the color of the line.

If he can see the line, then his addiction is satisfied, so he just drives forward. If he can’t see the line, then he gets the jitters, slowly at first, and then increases his jitter until he can see the line. And that’s basically it, although I did add in a slight optimization to remember what direction the most recent jitter ended on, and use that as the starting move for the next time he jitters.

This works well in turns (especially on an oval where all turns are in the same direction), but on straight-aways you can see in the video what the effect is.
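As a rough illustration, the Line Addict idea can be sketched in Python. This is not the RIS program that runs on the bot. The function name `jitter_search`, the `sees_line` callback, and the 10-degree step are all made up for the example, and the 90-degree limit is only borrowed from the "swivel range" remark in the conclusion. The sketch also simplifies by checking the sensor only at the end of each swing.

```python
from typing import Callable, Tuple

LEFT, RIGHT = -1, 1


def jitter_search(
    sees_line: Callable[[int], bool],
    start_direction: int = LEFT,
    step_deg: int = 10,   # assumed swing increment, not from the real bot
    max_deg: int = 90,    # assumed give-up point ("swivel range")
) -> Tuple[bool, int, int]:
    """Swing side to side with growing amplitude until the line is seen.

    sees_line(heading) is a hypothetical stand-in for reading the light
    sensor with the bot rotated `heading` degrees away from where it lost
    the line (negative = left, positive = right).

    Returns (found, last_direction, swings_taken). On success, feed
    last_direction back in as start_direction for the next search.
    """
    direction = start_direction
    amplitude = step_deg
    swings = 0
    while amplitude <= max_deg:
        for _ in range(2):  # one swing to each side at this amplitude
            swings += 1
            if sees_line(direction * amplitude):
                return True, direction, swings
            direction = -direction
        amplitude += step_deg  # the jitter grows
    return False, direction, swings


# The line is 30 degrees to the right, and the bot guesses left first.
found, last_dir, swings = jitter_search(lambda h: h == 30, start_direction=LEFT)
print(found, last_dir, swings)

# Next time, start with the direction that worked last time.
print(jitter_search(lambda h: h == 20, start_direction=last_dir))
```

In this toy model, remembering the last successful direction cuts the second search from six swings to three, which is the same effect that helps on an oval where every turn bends the same way.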

Roverbot vs. Sandwich

So you’ve got two line followers. Just how long can you resist racing them against one another? For me it’s not too long. Time to race Roverbot against Sandwich.

Roverbot vs. Sandwich Race: .wmv (852 KB), .avi (3.92 MB)

Different Sensors

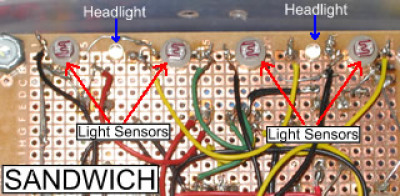

For line following, Sandwich uses four CdS photoresistors. Wired in parallel, there are two for the left and two for the right, and as Sandwich drives, he straddles the line. In addition, Sandwich has two white visible-light LEDs for headlights. These shine on the floor and the line, and the photoresistors sense the reflected light.

Roverbot in this configuration uses a single Lego light sensor. This has a red visible-light LED for illumination, and a single light sensor (I’m not sure if it’s a CdS cell or a phototransistor). So while Sandwich straddles the line, Roverbot tries to keep his single eye on top of the line.

Different Techniques

These different sensor layouts lead to different line following techniques. Sandwich starts off with his left sensor array facing the floor to the left of the line, and his right sensor array above the right side of the line. If he sees equal brightness on both sides, he drives straight. As the line curves to one side, that side will become darker. So Sandwich’s left sensors will read a different value than his right sensors, and Sandwich knows which direction to turn in.
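The two-sided comparison boils down to a few lines. The Python sketch below is only an illustration of the idea, not Sandwich's actual code. The `steer` function, the `tolerance` value, and the assumption that a higher reading means a brighter surface are all invented for the example.

```python
def steer(left_brightness: float, right_brightness: float,
          tolerance: float = 5.0) -> str:
    """Pick a steering action from two brightness readings.

    Assumes higher numbers mean a brighter surface. `tolerance` is a
    made-up dead band so small sensor noise does not cause a turn.
    """
    difference = left_brightness - right_brightness
    if abs(difference) <= tolerance:
        return "straight"  # both sides look equally bright
    # The darker side is the side the line is curving toward.
    return "left" if difference < 0 else "right"


print(steer(100, 100))  # equal brightness on both sides
print(steer(60, 100))   # left side darker
print(steer(100, 60))   # right side darker
```

Because the sign of the difference already says which way the line went, a bot with two sensor groups never needs to search for it.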

Roverbot, the cyclops, does not have that luxury. When his single light sensor goes off of the line, he does not have another sensor to compare to, so he does not know what direction he must turn in to find the line again. And that’s what leads to the jittering algorithm I devised for him to scan back and forth until he finds the color of the line again.

See for yourself which sensor arrangement is more effective. Bear in mind that a variety of factors affect line-following speed, but here the sensor limitations dictate the algorithm, and the algorithm is the critical factor for performance.

In Conclusion

I’m very happy with my Lego RIS 2.0 kit. The Roverbot base is easy to build and seems robust enough for use in some more sophisticated applications. The programming environment was easy enough for me to get started quickly. I do want to try out some other programming options like NQC or Java, but that can wait until I find something I can’t do with the standard language.

My Roverbot is only a mediocre line follower. In the current version of the software, it’s limited to following the black line on the race track that comes with the Lego kit, so a future upgrade would be to enhance the initialization sequence to figure out what color line to follow. Also, once the bot loses the line and starts to jitter, if the jitter keeps going long enough that he’s swinging 90 degrees to either side, then he has lost the line. An enhancement would be to have him try some maneuvers to find a line that’s out of “swivel range”.
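One way the smarter initialization could work is sketched below in Python. It is purely hypothetical and has not been tried on the bot. The sketch assumes the bot takes one reading while sitting on the line and one over bare floor, and that higher readings mean a brighter surface. The name `make_line_detector` and the sample numbers are invented for the example.

```python
from typing import Callable


def make_line_detector(line_sample: float,
                       floor_sample: float) -> Callable[[float], bool]:
    """Build an on-line test from two startup readings.

    line_sample:  reading taken with the sensor over the line (assumed)
    floor_sample: reading taken with the sensor over bare floor (assumed)
    """
    if line_sample == floor_sample:
        raise ValueError("line and floor look identical to the sensor")
    threshold = (line_sample + floor_sample) / 2  # midpoint between the two
    line_is_darker = line_sample < floor_sample

    def on_line(reading: float) -> bool:
        # Compare against the midpoint, on whichever side the line falls.
        if line_is_darker:
            return reading < threshold
        return reading > threshold

    return on_line


# Dark line on a light floor (sample values are made up).
on_dark_line = make_line_detector(line_sample=35, floor_sample=55)
print(on_dark_line(38), on_dark_line(52))

# Light line on a dark floor works the same way.
on_light_line = make_line_detector(line_sample=60, floor_sample=40)
print(on_light_line(58), on_light_line(42))
```

With a detector like this, the rest of the program would not care what color the line is.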